Software Development Has Split Into Two Different Industries

By Scott Weiner (AI Lead at NeuEon, Inc.)

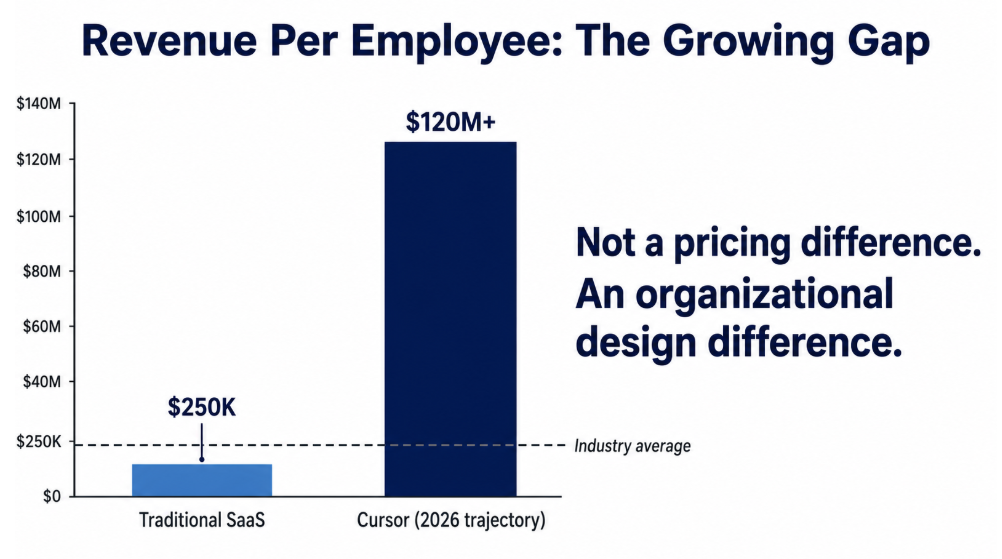

Cursor’s $2 billion funding round values the company at $50 billion. The company projects $6 billion in annual recurring revenue by the end of 2026. It employs an estimated 300 people. The average SaaS company generates roughly $250,000 in revenue per employee. At that headcount, Cursor’s trajectory works out to roughly $20 million per employee, about 80 times that ratio. The difference is in how they build software.

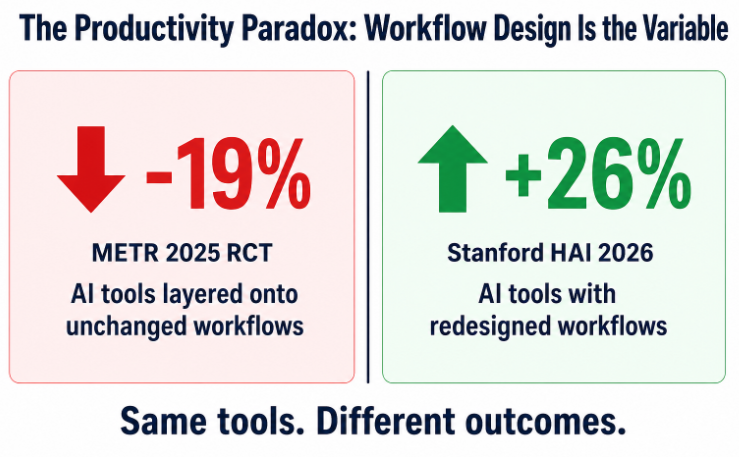

The Stanford HAI AI Index published in April 2026 documents a 26 percent productivity gain in software development from AI tools. A 2025 randomized controlled trial run by METR, an AI safety research organization, found the opposite: AI made experienced developers 19 percent slower. Both numbers are accurate. They describe different organizations. The Stanford figure captures teams that redesigned their workflows around AI’s actual capabilities. The METR figure captures what happens when AI tools get layered onto unchanged processes. After completing the METR study, participants estimated AI had improved their speed by 20 percent. They were wrong about both the direction and the magnitude.

Two realities are running simultaneously in software development. A small number of organizations are generating revenue-per-employee ratios that look like a different industry. Most software organizations are getting measurably slower while describing their AI investments as transformational. The gap is an organizational design problem, and it is accelerating.

Why the Gap Exists

Two restaurants adopt the same industrial cooking equipment. The first redesigns its menu, kitchen layout, staffing model, and ordering system around what the new equipment can actually do. The second installs it next to the existing stoves and tells its chefs to use it when it seems helpful. Three months later, the first serves twice as many covers from the same kitchen footprint. The second has higher utility bills, confused staff, and slightly faster prep on three dishes.

This describes the current state of AI tool adoption in most software organizations. The tools produce real productivity gains for teams that redesigned their workflows around the tools’ actual capabilities. They produce real productivity losses for teams that layered them onto unchanged processes.

The METR study captures the second restaurant precisely. Developers spent time evaluating AI suggestions, correcting output that looked right but was subtly wrong, context-switching between their own mental model and the model’s output, and debugging classes of errors that traditional code review processes were never designed to catch. The workflow changed. The workflow design did not. Running a more capable engine through an unchanged transmission produces friction.

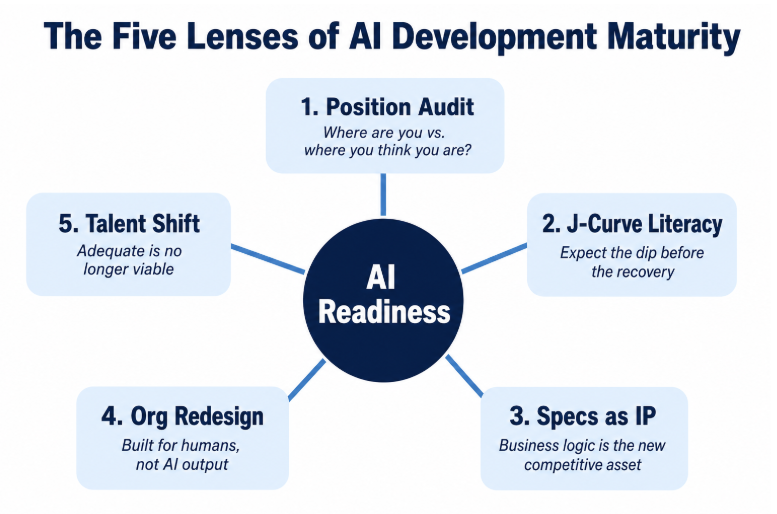

The Five Lenses

Closing this gap requires clarity on five dimensions. Every organization needs to hold all five in view at once, because progress on one without the others produces diminishing returns.

Position Audit

Where do your velocity metrics say you actually are?

Most software organizations believe they are operating at a level of AI integration one to two levels above where their output data suggests. This mirrors every major technology adoption pattern: the announcement of the initiative gets conflated with the completion of the initiative. Leaders hear that developers are using AI tools and conclude that productivity gains are flowing.

As the METR data shows, developers can be systematically wrong about their own productivity. The reliable diagnostic is output data: cycle time, throughput, and defect introduction rates, measured before and after AI tool deployment and controlled for task complexity. Organizations that have run this measurement are frequently surprised. Those that rely on developer self-assessment usually believe the picture is better than the output data shows.
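A minimal sketch of that before-and-after comparison, in Python, might look like the following. The `Task` record, its fields, the complexity buckets, and the sample data are all hypothetical; real data would come from your issue tracker and version control. Throughput here is a raw count of completed tasks, which assumes the before and after windows are the same length. Comparisons happen only within a complexity bucket, so a shift toward easier work after rollout does not masquerade as a speedup.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Task:
    complexity: str    # hypothetical bucket label, e.g. "small" or "large"
    period: str        # "before" or "after" the AI tool rollout
    cycle_days: float  # elapsed days from start of work to merge
    defects: int       # defects later traced back to this change


def summarize(tasks, complexity, period):
    """Median cycle time, throughput, and defect rate for one bucket and period."""
    group = [t for t in tasks if t.complexity == complexity and t.period == period]
    if not group:
        return None
    return {
        "median_cycle_days": median(t.cycle_days for t in group),
        # Assumes equal-length before/after windows, so a raw count is comparable.
        "throughput": len(group),
        "defects_per_task": sum(t.defects for t in group) / len(group),
    }


def compare(tasks):
    """Compare before vs. after within each complexity bucket, never across buckets."""
    report = {}
    for complexity in sorted({t.complexity for t in tasks}):
        before = summarize(tasks, complexity, "before")
        after = summarize(tasks, complexity, "after")
        if before is None or after is None:
            continue  # no like-for-like comparison is possible for this bucket
        report[complexity] = {
            "cycle_time_change_pct": 100
            * (after["median_cycle_days"] - before["median_cycle_days"])
            / before["median_cycle_days"],
            "throughput_change": after["throughput"] - before["throughput"],
            "defect_rate_change": after["defects_per_task"] - before["defects_per_task"],
        }
    return report


# Illustrative, made-up data: the "large" bucket has no "after" tasks and is skipped.
example_tasks = [
    Task("small", "before", 4.0, 0),
    Task("small", "before", 6.0, 1),
    Task("small", "after", 5.0, 1),
    Task("small", "after", 6.0, 0),
    Task("small", "after", 7.0, 1),
    Task("large", "before", 20.0, 2),
]
print(compare(example_tasks))
```

With this toy data, the small bucket completes one more task after rollout, yet its median cycle time rises 20 percent and its defect rate climbs. That mixed picture is exactly what self-assessment tends to smooth over.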

The same Stanford HAI AI Index documents 88 percent global organizational AI adoption. That number closes the question of whether to adopt. Execution quality is now the measure, and most organizations do not yet know where they actually stand.

J-Curve Literacy

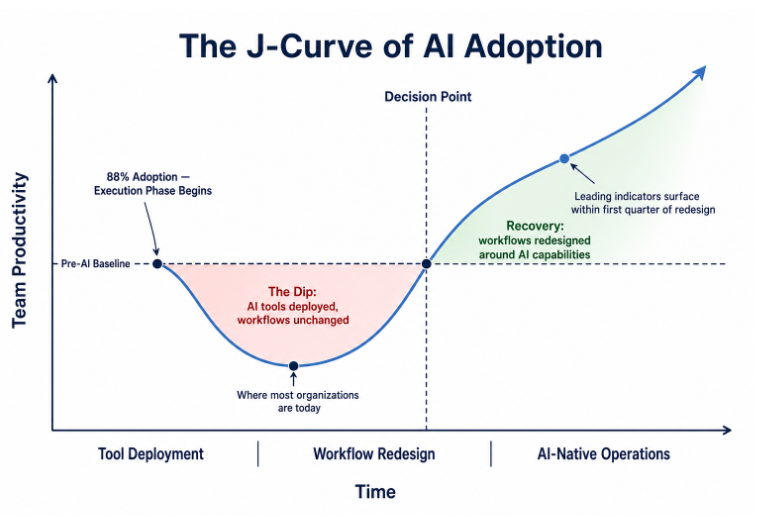

Every significant workflow transition produces a J-curve: productivity dips before it recovers. This happened in cloud migrations, ERP deployments, and Agile transformations. It is happening with AI coding tools for a predictable reason: the tools change the workflow, but the workflow design does not change to match.

With AI coding tools, the dip happens because teams are reviewing AI output line by line, debugging subtle errors that traditional quality gates were not designed to surface, and managing the cognitive overhead of integrating machine output into mental models built for human-generated code. Teams pay the cost of AI-generated output without capturing the structural benefit of redesigning around it.

Most software organizations are sitting at the bottom of the J right now. Those who interpret the dip as evidence that AI does not work will wait it out at the bottom indefinitely. Those who recognize the dip as the expected outcome of partial adoption will use it to drive the workflow redesign that produces recovery. The honest timeline is months to years, though leading indicators of velocity typically surface within the first quarter of a full workflow redesign. Teams that track those indicators weekly rather than quarterly move through the dip faster. The magnitude of gain on the other side correlates directly with the depth of the redesign you are willing to undertake during the dip.
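Weekly tracking can be as simple as comparing each week’s value of one leading indicator against its pre-rollout baseline. The sketch below uses a hypothetical `j_curve_summary` function and made-up numbers to locate the bottom of the dip and the first week the indicator returns to baseline. It assumes an indicator where higher is better, such as merged changes per week; for a lower-is-better metric like cycle time, invert the values before passing them in.

```python
def j_curve_summary(weekly, baseline):
    """Locate the bottom of the dip and the recovery point in a weekly series.

    weekly:   values of one leading indicator (higher is better), one per week,
              starting with week 0 of the workflow redesign.
    baseline: the pre-rollout average of the same indicator.
    """
    if not weekly or baseline <= 0:
        raise ValueError("need at least one week of data and a positive baseline")
    relative = [value / baseline for value in weekly]  # 1.0 means back at baseline
    trough = min(range(len(relative)), key=relative.__getitem__)
    # First week at or after the trough where the indicator is back at baseline.
    recovery = next((w for w in range(trough, len(relative)) if relative[w] >= 1.0), None)
    return {
        "trough_week": trough,
        "trough_depth_pct": round(100 * (1 - relative[trough]), 1),
        "recovery_week": recovery,  # None means the team is still in the dip
    }


# Illustrative numbers only: a dip that bottoms out in week 2.
print(j_curve_summary([10, 9, 8, 8.5, 9.5, 10.5, 11], baseline=10))
```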

Spec Quality as the New IP

AI agents build what you describe. Where descriptions are precise, you get software that matches intent. Where descriptions have gaps, agents fill those gaps with inferences drawn from statistical patterns rather than judgment informed by customer context or organizational history. The bottleneck has moved from implementation speed to specification quality, and specification quality is a function of how deeply a team understands the system, the customer, and the problem.

Code is becoming a commodity. AI can produce it at scale, and the cost of generating it falls every quarter. The intent behind the code retains its value: the business rules, the customer logic, the edge cases that only your team understands because they lived through the decisions that created them. That institutional knowledge, made explicit in precise specifications, is your primary engineering asset. Organizations that surface and formalize it are building IP. Everyone else is hoping senior engineers do not leave.

For new development this reframe is demanding. For legacy systems it is vastly harder, and most enterprise software consists of legacy systems. A decade of implicit decisions is embedded in running code. The billing edge case that applies only to a specific customer segment. The service carved off the monolith during a production crisis that nobody formally documented. The configuration values three senior engineers understand and nobody else does. These systems are their own specification. No written document fully describes them because the small decisions that shaped them were never written down.

Surfacing that knowledge requires the engineers who hold institutional memory and the product people who understand actual user behavior versus documented behavior. Organizations that invest in this phase move faster later. Organizations that skip it build software that passes tests and violates actual intent.
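One way to make institutional knowledge explicit is to write specifications as executable examples, each carrying the rationale that a new engineer or an agent would otherwise have to guess. The late-fee rule below is entirely hypothetical, as are the segment names and the `check`, `late_fee`, and `naive_late_fee` functions. The point is the shape: boundary cases written down with their reasons, and any implementation, human- or agent-written, checked against them.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Example:
    """One row of an executable specification: inputs, expected output, and why."""
    segment: str
    days_late: int
    balance: Decimal
    expected_fee: Decimal
    rationale: str  # the institutional knowledge, written down


# Hypothetical rule for illustration only: a 2% late fee, waived for the
# "legacy-annual" segment through a 10-day grace period.
LATE_FEE_SPEC = [
    Example("standard", 0, Decimal("100.00"), Decimal("0.00"), "not late, no fee"),
    Example("standard", 1, Decimal("100.00"), Decimal("2.00"), "fee applies from day one"),
    Example("legacy-annual", 10, Decimal("100.00"), Decimal("0.00"), "grace period includes day 10"),
    Example("legacy-annual", 11, Decimal("100.00"), Decimal("2.00"), "grace period ends after day 10"),
]


def check(implementation, spec):
    """Return (example, actual) pairs the implementation gets wrong; empty means it matches."""
    failures = []
    for ex in spec:
        actual = implementation(ex.segment, ex.days_late, ex.balance)
        if actual != ex.expected_fee:
            failures.append((ex, actual))
    return failures


def late_fee(segment, days_late, balance):
    """A candidate implementation, human- or agent-written, to validate."""
    grace_days = 10 if segment == "legacy-annual" else 0
    if days_late <= grace_days:
        return Decimal("0.00")
    return (balance * Decimal("0.02")).quantize(Decimal("0.01"))


def naive_late_fee(segment, days_late, balance):
    """Plausible-looking code that ignores the segment-specific grace period."""
    if days_late <= 0:
        return Decimal("0.00")
    return (balance * Decimal("0.02")).quantize(Decimal("0.01"))


for ex, actual in check(naive_late_fee, LATE_FEE_SPEC):
    print(f"violates: {ex.rationale} (expected {ex.expected_fee}, got {actual})")
```

The naive version passes three of the four rows. A test suite that never wrote down the grace-period boundary would pass it entirely.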

Organizational Redesign

Software engineering organizations were designed to coordinate humans doing implementation work. Every ceremony and every role exists because humans building software in teams need coordination structures.

Stand-up meetings exist because developers on shared codebases need daily synchronization. Sprint planning exists because humans have finite working memory and need regular reprioritization. Code review exists because humans make mistakes other humans can catch. QA teams exist because developers cannot evaluate their own output objectively. These are rational responses to specific human cognitive constraints.

When AI handles an increasing share of implementation, those constraints change. Structures built around human limitations become friction. Sprint planning for a team where implementation happens in hours rather than weeks needs to look different. Code review for AI-generated output at high volume needs to look different. These structures need to change, and the immediate challenge is sequencing that change and managing the impact on people whose roles are directly tied to coordination work.

Organizations seeing 25 to 30 percent productivity gains from AI redesigned their development workflows at the process level.

Talent Recalibration

Entry-level software engineering job postings fell 25 percent year-over-year in 2024. The Stanford HAI AI Index confirms employment for software developers aged 22 to 25 declined roughly 20 percent in 2024. Goldman Sachs economists, in research published in April 2026, found AI is erasing roughly 16,000 net jobs per month across the U.S. economy, with Gen Z and entry-level workers bearing the largest share of displacement. The tasks junior developers traditionally performed (fixing small bugs, building standard components, writing test scripts) are now handled faster and at lower cost by AI tools.

The career ladder in software engineering has functioned as an apprenticeship model. Juniors learn by doing small tasks. Seniors review their work. Over five to seven years, a junior becomes a senior through accumulated exposure to real decisions on real codebases. AI is removing the bottom rungs of that ladder at exactly the moment the skills required at the senior level are becoming more demanding. The organizations that recognize this earliest are actively redesigning how senior capability gets built: structured exposure to architecture decisions, AI-augmented code review as a teaching tool, and deliberate mentorship on specification craft rather than implementation mechanics.

The engineers who deliver distinctive value in this environment can reason about system architecture, write specifications detailed enough for autonomous agents to implement correctly, and evaluate whether shipped software actually serves the users it was built for. These capabilities separated great engineers from adequate ones before AI. Adequate is no longer a viable professional position at any seniority level, because adequate is what the models do.

Hiring patterns are shifting accordingly, toward generalists who reason across domains rather than specialists expert in a narrow technical stack. When AI handles implementation, the human value is in understanding the problem space broadly enough to direct that implementation correctly.

The Brownfield Reality

Most of the discussion above assumes new development. Most enterprise software organizations, however, are maintaining and extending systems that have been running for years or decades, carrying real revenue and serving real users.

Legacy systems require a different starting point. The specification for such a system is incomplete. The tests, where they exist at all, cover a fraction of actual behavior. The rest runs on institutional knowledge held by long-tenure engineers.

A realistic migration path starts with using AI to accelerate development work already in progress, accepting the J-curve, and measuring what is actually happening at the output level. The next step is using AI to generate specifications from existing code, building behavioral documentation of what the system actually does. After that comes redesigning the delivery pipeline to handle AI-generated code at volume, with testing strategies and quality gates calibrated for it. Only on that foundation does it make sense to begin shifting new development toward higher levels of AI autonomy.
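Generating specifications from existing code resembles the established practice of characterization testing, sometimes called golden-master testing: record what the code does today, then check every change against that record. The sketch below is a minimal illustration under stated assumptions. `legacy_discount` stands in for an undocumented legacy function and `rewritten_discount` for an AI-assisted rewrite; both, along with the sample inputs, are hypothetical. In practice AI tools might help propose the inputs worth recording, while the recording and comparison themselves stay deterministic.

```python
import json


def legacy_discount(customer_type, order_total):
    """Stand-in for an undocumented legacy rule (hypothetical)."""
    if customer_type == "partner" and order_total > 1000:
        return round(order_total * 0.15, 2)
    if order_total > 500:
        return round(order_total * 0.05, 2)
    return 0.0


def rewritten_discount(customer_type, order_total):
    """A plausible-looking rewrite that silently drops the partner rule (hypothetical)."""
    return round(order_total * 0.05, 2) if order_total > 500 else 0.0


def record_behavior(fn, inputs):
    """Capture what the code does today as a golden record, keyed by input."""
    return json.dumps({json.dumps(list(args)): fn(*args) for args in inputs}, sort_keys=True)


def diff_behavior(fn, golden):
    """List (input, recorded, actual) for every input where fn departs from the record."""
    diffs = []
    for key, recorded in json.loads(golden).items():
        args = json.loads(key)
        actual = fn(*args)
        if actual != recorded:
            diffs.append((args, recorded, actual))
    return diffs


SAMPLE_INPUTS = [("partner", 1500), ("partner", 800), ("retail", 800), ("retail", 100)]
golden = record_behavior(legacy_discount, SAMPLE_INPUTS)
print(diff_behavior(legacy_discount, golden))     # the legacy code matches its own record
print(diff_behavior(rewritten_discount, golden))  # the rewrite departs for large partner orders
```

The golden record does not say whether the partner discount is correct, only that it exists. Deciding which recorded behaviors are intent and which are accidents is the work that still needs the engineers who hold institutional memory.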

That path takes time. Anyone claiming otherwise is selling something. The organizations that complete it fastest are not those that deployed the most sophisticated vendor tools. They are those that invested in understanding what their systems actually do and building the specification infrastructure that autonomous development requires. For a 50-year-old insurer or a large financial institution, the goal is not to match Cursor’s revenue-per-employee ratio next year. It is to avoid being the second restaurant: the one that paid for the equipment, confused the staff, and ended up with higher utility bills and slower service.

The Harder Question

Cursor is raising $2 billion at a $50 billion valuation in April 2026. The company has fewer than 500 employees. Eighty-eight percent of organizations globally have adopted AI, according to Stanford’s 2026 AI Index. Most of them are nowhere near Cursor’s trajectory. The gap between those two facts traces back to workflow design, and the companies that close it first will operate in a different cost structure than the ones that do not.

The measure of where an organization sits on this curve is the quality of the questions it is already asking internally.

How much of the coordination infrastructure inside your software organization was built to manage constraints that no longer exist, and what would you build differently if you were designing it today?

Have your own AI transformation story? We’d love to hear it. Connect with Scott on LinkedIn or reach out to NeuEon at neueon.com/contact.

Want to learn more about fractional CAIO engagements? Contact NeuEon to discuss your AI transformation.